
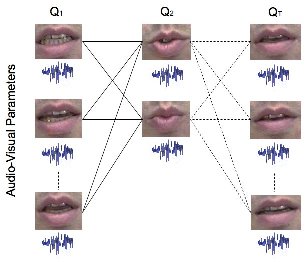

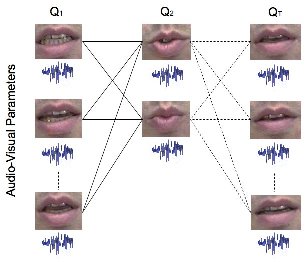

Video Realistic Talking Head: We are working on various aspects of facial analysis/synthesis, both in 2D and 3D. Our early work was concerned with driving the facial appearance from audio input alone, i.e. "talking heads". We extended basic ideas from standard (eigenmodel-based) Active Appearance Models to work with audio features as well as shape and texture features.
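One way to picture an appearance model extended with audio is as follows. Each frame's shape, texture and audio feature vectors are stacked into one vector, and a single PCA eigenmodel is built over all three. At synthesis time, the model parameters are fitted to the audio part alone, and shape and texture are read off the reconstruction. The sketch below does this under simplifying assumptions. The helper names (`block_weight`, `build_joint_model`, `synthesise_from_audio`), the per-block variance normalisation and the random stand-in data are all hypothetical. They are not taken from the published model.

```python
import numpy as np

# Hypothetical sketch: one joint eigenmodel over [shape | texture | audio].

def block_weight(block):
    """Scale factor giving a feature block unit total variance, so that no
    block dominates the PCA merely because of its units or dimensionality."""
    return 1.0 / np.sqrt(block.var(axis=0).sum())

def build_joint_model(shape, texture, audio, n_modes):
    """PCA over concatenated per-frame features (one row per video frame)."""
    weights = [block_weight(b) for b in (shape, texture, audio)]
    joint = np.hstack([w * b for w, b in zip(weights, (shape, texture, audio))])
    mean = joint.mean(axis=0)
    # Rows of vt are orthonormal principal directions of the centred data.
    _, _, vt = np.linalg.svd(joint - mean, full_matrices=False)
    return mean, vt[:n_modes], weights

def synthesise_from_audio(audio_frame, mean, modes, weights, dims):
    """Fit the model parameters to the audio sub-vector only (least squares),
    then read shape and texture off the reconstructed joint vector."""
    n_shape, n_tex, _ = dims
    a0 = n_shape + n_tex
    target = weights[2] * audio_frame - mean[a0:]
    params, *_ = np.linalg.lstsq(modes[:, a0:].T, target, rcond=None)
    joint = mean + params @ modes
    shape_est = joint[:n_shape] / weights[0]      # undo the block weighting
    texture_est = joint[n_shape:a0] / weights[1]
    return params, shape_est, texture_est

# Usage with random stand-in data: 200 frames, 20 shape, 50 texture, 12 audio dims.
rng = np.random.default_rng(0)
shape_feats = rng.normal(size=(200, 20))
texture_feats = rng.normal(size=(200, 50))
audio_feats = rng.normal(size=(200, 12))
mean, modes, weights = build_joint_model(shape_feats, texture_feats, audio_feats, n_modes=8)
params, shape_est, texture_est = synthesise_from_audio(
    audio_feats[0], mean, modes, weights, (20, 50, 12))
```

With real data, the rows would come from tracked video frames paired with per-frame audio features. Random data only exercises the shapes of the computation.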

Taking ideas from Tracking People in 3D (above), the basic flat active appearance model was also modified to use a hierarchy, which enabled better control of the model.

Key Paper: Speech-driven facial animation using a hierarchical model (PDF), D.P. Cosker, A.D. Marshall, P.L. Rosin, Y.A. Hicks, IEE Proc. Vision, Image and Signal Processing, vol. 151, no. 4, pp. 314-321, 2004.

Videos: Example_1, Example_2 (both example videos generated using only speech), Tracking_Example


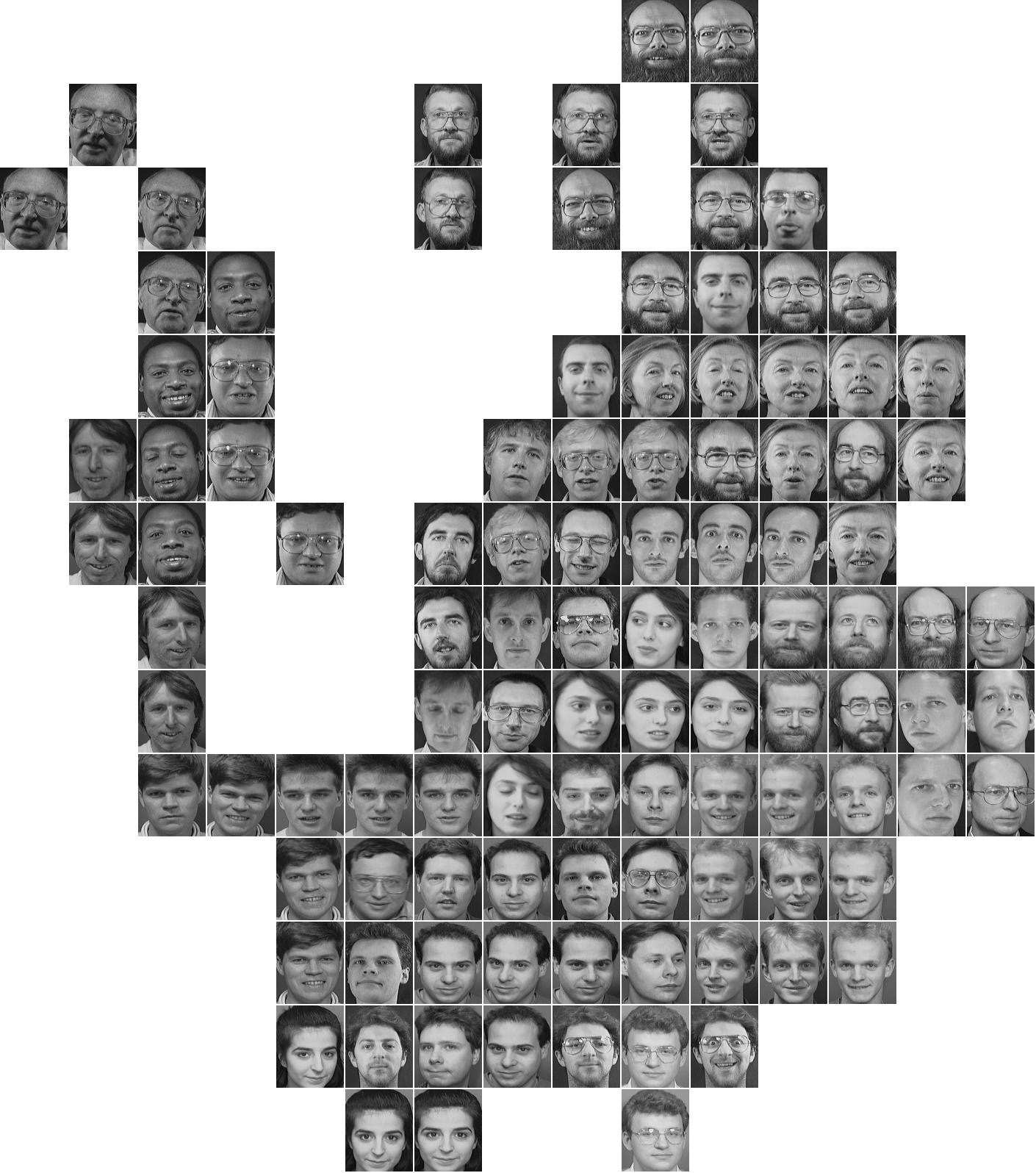

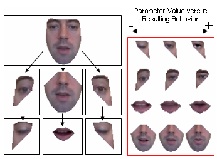

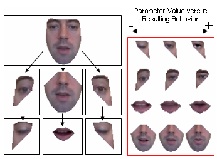

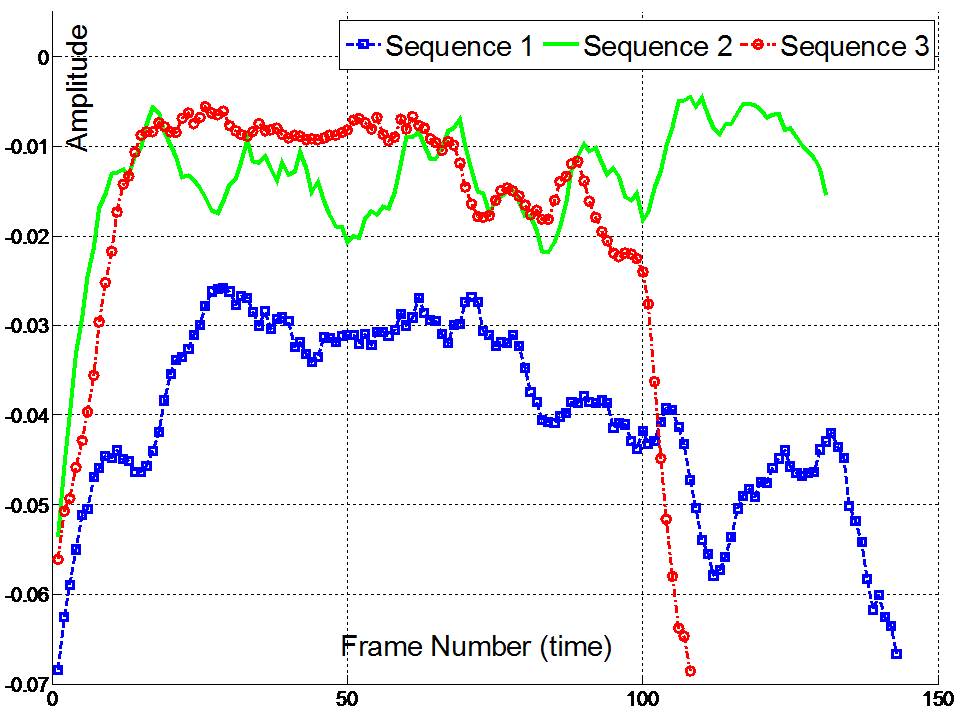

Facial Dynamics: A major theme of my research in recent years has been the modelling of facial dynamics. Taking the hierarchical face decomposition (above) further, we can model the dynamics of each region by sampling, over time, the parameter values along the principal eigenvectors that span the region. Since the top principal components capture the greatest variation, we can represent the dynamics with a few (often a single) parameters. (See below for more examples.)
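To make the idea concrete, the sketch below projects every frame of one facial region onto that region's leading principal component. The result is a one-parameter trajectory over time. It assumes each frame of the region is already available as a flattened shape/texture vector. The function names and the synthetic data are hypothetical.

```python
import numpy as np

def region_trajectory(frames, n_components=1):
    """Project every frame of one facial region onto the region's leading
    principal components.

    frames: (T, D) array, one flattened shape/texture vector per frame.
    Returns the (T, n_components) parameter trajectory, the mean vector,
    the components, and the fraction of variance they explain.
    """
    mean = frames.mean(axis=0)
    centred = frames - mean
    _, s, vt = np.linalg.svd(centred, full_matrices=False)
    components = vt[:n_components]
    trajectory = centred @ components.T
    explained = (s[:n_components] ** 2).sum() / (s ** 2).sum()
    return trajectory, mean, components, explained

def reconstruct(trajectory, mean, components):
    """Rebuild region frames from (possibly edited) parameter values."""
    return mean + trajectory @ components

# Synthetic region driven by one underlying movement plus small noise.
rng = np.random.default_rng(1)
t = np.linspace(0.0, 2.0 * np.pi, 100)
movement = np.sin(t)
direction = rng.normal(size=30)
frames = np.outer(movement, direction) + 0.01 * rng.normal(size=(100, 30))

traj, mean, comps, explained = region_trajectory(frames)
print(f"variance explained by one parameter: {explained:.4f}")
```

Editing `traj` (for example, inserting a blink-shaped pulse) and calling `reconstruct` is the sense in which a single parameter can drive a region.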

We have applied this technique in several applications, including animating a talking head (e.g. inserting artificial blinks; see above), behaviour transfer between two talking head models, evaluating the psychological perception of smiles, developing a 3D facial biometric, and dental applications of 3D facial dynamics.


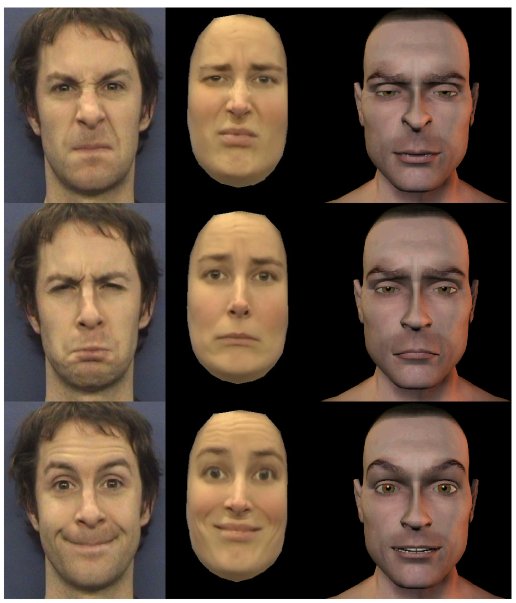

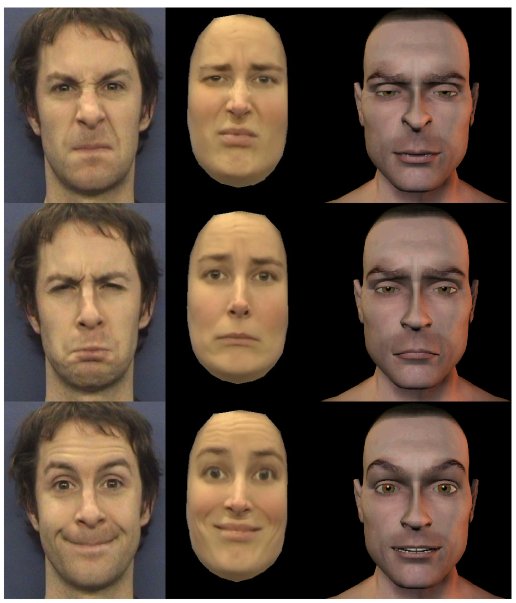

Facial Behaviour Transfer: We have developed a method for re-mapping animation parameters between multiple types of facial model for performance driven animation. A facial performance can be analysed in terms of a set of facial action parameter trajectories using a modified appearance model whose modes of variation encode specific facial actions that we can pre-define. These parameters can then be used to animate other modified appearance models or 3D morph-target based facial models. Thus, the animation parameters analysed from the video performance may be re-used to animate multiple types of facial model.
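The following is a minimal sketch of re-mapping onto a morph-target model. It assumes each pre-defined action parameter has a calibrated neutral value and peak value, and that the target 3D model has one morph target per action. The linear rescaling in `params_to_weights` is an illustrative assumption, not the mapping used in the paper. `blend_morph_targets` is the standard blendshape formula v = v0 + sum_k w_k (v_k - v0).

```python
import numpy as np

def params_to_weights(trajectories, neutral_values, peak_values):
    """Hypothetical linear calibration: map each action parameter so that its
    neutral value becomes weight 0 and its value at the action's peak becomes
    weight 1, clipped to [0, 1].

    trajectories: (T, K) parameter values for K pre-defined actions.
    """
    weights = (trajectories - neutral_values) / (peak_values - neutral_values)
    return np.clip(weights, 0.0, 1.0)

def blend_morph_targets(neutral_mesh, targets, weights):
    """Standard morph-target blend for one frame: v = v0 + sum_k w_k (v_k - v0).

    neutral_mesh: (V, 3); targets: (K, V, 3); weights: (K,).
    """
    deltas = targets - neutral_mesh
    return neutral_mesh + np.tensordot(weights, deltas, axes=1)

# Toy example: two actions, a four-vertex mesh.
neutral_mesh = np.zeros((4, 3))
targets = np.stack([neutral_mesh + [1.0, 0.0, 0.0],   # action 0 moves +x
                    neutral_mesh + [0.0, 1.0, 0.0]])  # action 1 moves +y
trajectories = np.array([[0.0, -1.0], [1.5, 0.5], [3.0, 2.0]])
weights = params_to_weights(trajectories,
                            neutral_values=np.array([0.0, -1.0]),
                            peak_values=np.array([3.0, 2.0]))
frames = [blend_morph_targets(neutral_mesh, targets, w) for w in weights]
```

Because the weights depend only on the action parameters, the same trajectories could equally drive a second appearance model instead of a mesh.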

Key Paper: Towards Automatic Performance Driven Animation Between Multiple Types of Facial Model (PDF), D. Cosker, R. Borkett, D. Marshall and P. L. Rosin, IET Computer Vision, Vol. 2, No. 3, pp. 129-141, 2008.

Videos: Overview, Simple_Morph_Target_Mapping_Example


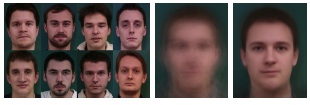

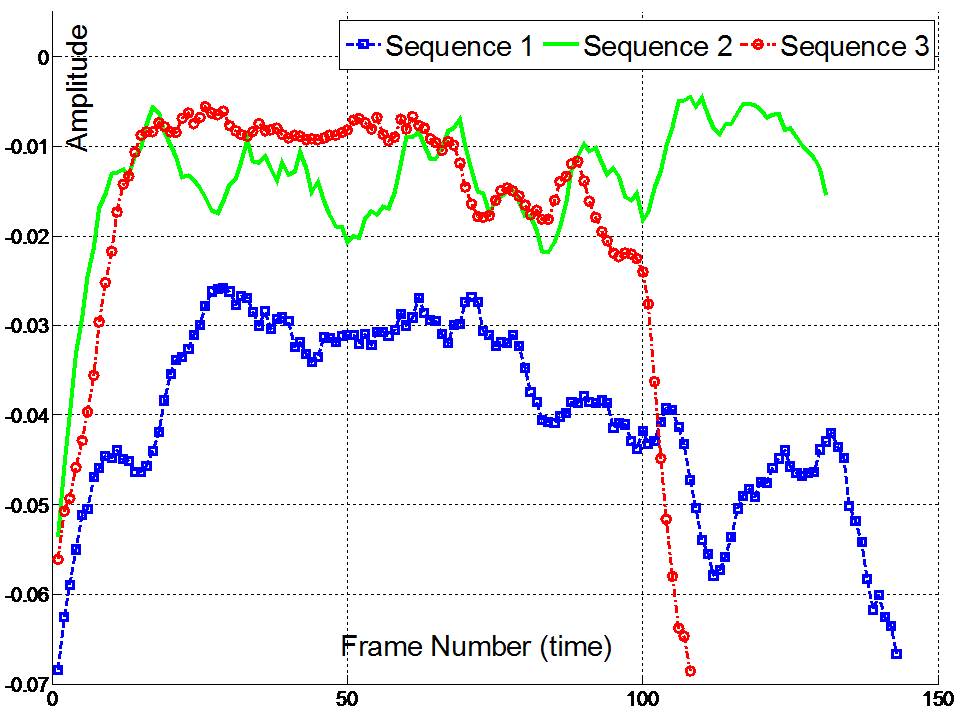

Emotion and Expressive Facial Dynamics: Our face model has been applied to synthesise stimuli for psychological experiments.

The images show how a single parameter of the model (its current value is marked by the red dot) can be used to vary the smile. We can then model the temporal onset, apex and offset of the smile, and also vary its amplitude (intensity) through the strength of the principal components.

This was used to determine how the temporal dynamics affect whether a smile is perceived as genuine or fake.
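As an illustration of controlling onset, apex, offset and amplitude independently, the sketch below generates one smile-parameter trajectory sampled at a fixed frame rate. The half-cosine ramps, the 25 fps default and the function name `smile_curve` are hypothetical choices. The curves actually used for the experimental stimuli are not reproduced here.

```python
import math

def smile_curve(onset, apex, offset, amplitude, fps=25):
    """Hypothetical smile-parameter trajectory, sampled at `fps`:
    a smooth rise over `onset` seconds, a hold at `amplitude` for `apex`
    seconds, then a smooth fall over `offset` seconds back to zero.
    The half-cosine ramp shape is an illustrative assumption."""
    def ramp(n):
        # n samples rising smoothly from 0 towards (but not reaching) 1
        return [0.5 - 0.5 * math.cos(math.pi * i / n) for i in range(n)]

    n_on, n_apex, n_off = (round(d * fps) for d in (onset, apex, offset))
    rise = ramp(n_on)
    fall = [1.0 - v for v in ramp(n_off)]  # starts at 1 and decays towards 0
    envelope = rise + [1.0] * n_apex + fall + [0.0]
    return [amplitude * v for v in envelope]

# Same apex duration, different onset/offset speed and intensity (illustrative values).
slow = smile_curve(onset=0.4, apex=1.0, offset=0.4, amplitude=1.0)
abrupt = smile_curve(onset=0.2, apex=1.0, offset=0.2, amplitude=0.6)
```

Each sample c_t would then be applied to the smile mode as frame_t = mean + c_t * smile_component. Changing only the timing arguments changes the dynamics while leaving the smile's appearance at its apex untouched.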

The work with our internationally renowned School of Psychology benefitted both Schools' research. We provided the most realistic stimulus models available for their experiments, and their large experimental testbed provided results not only for the Psychology experiments but also for our own (computer science) evaluations of our facial models.

Key Papers:

Effects of Dynamic Attributes of Smiles in Human and Synthetic Faces: A Simulated Job Interview Setting (PDF), E. Krumhuber, A. Manstead, D. Cosker, D. Marshall and P. L. Rosin, Journal of Nonverbal Behavior, vol. 33, no. 1, pp. 1-15, 2009.

Facial Dynamics as Indicators of Trustworthiness and Cooperative Behaviour (PDF), E. Krumhuber, A. Manstead, D. Cosker, P.L. Rosin and A.D. Marshall, Emotion, Vol. 7, No. 4, pp. 730-735, 2007.

Videos: Overview, Smile_Analysis (the smile parameter curve shown there is then manipulated to create 'fake' or 'genuine' smiles).


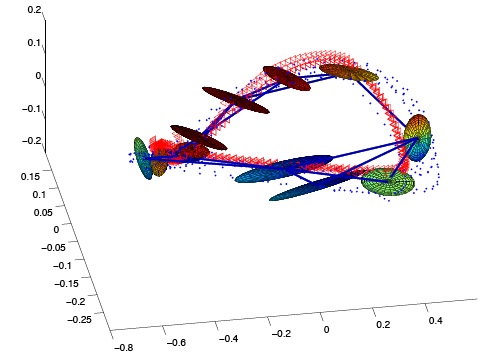

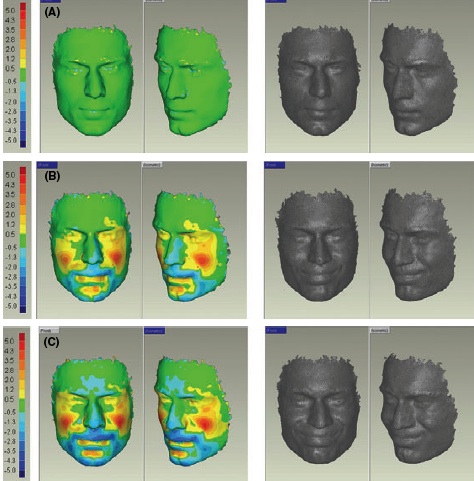

3D Facial Biometrics: So far, the human face has mainly been seen as a physiological biometric, and very little work has been done to exploit the idiosyncrasies of facial gestures for person identification. We proposed a markerless method to capture 3D facial motions, and investigated a number of pattern matching techniques that operate accurately on very short facial actions. Qualitative and quantitative evaluations were performed for both the face identification and the face verification problems. The emphasis was placed on designing a system that is not only accurate but also usable in a real-life scenario.
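The two evaluation problems differ in what is asked of the matcher. Identification is one-to-many: find the nearest enrolled person. Verification is one-to-one: accept or reject a claimed identity against a threshold. The sketch below shows that difference with a pluggable distance. The gallery, the toy 1D "facial action" templates, the threshold and `mean_abs_distance` are all hypothetical placeholders.

```python
from typing import Callable, Dict, Sequence

Seq = Sequence[float]
Distance = Callable[[Seq, Seq], float]

def identify(probe: Seq, gallery: Dict[str, Seq], distance: Distance) -> str:
    """Closed-set identification (one-to-many): return the enrolled identity
    whose template is nearest to the probe."""
    return min(gallery, key=lambda person: distance(probe, gallery[person]))

def verify(probe: Seq, claimed: Seq, distance: Distance, threshold: float) -> bool:
    """Verification (one-to-one): accept the identity claim only if the probe
    is within `threshold` of the claimed identity's template."""
    return distance(probe, claimed) <= threshold

def mean_abs_distance(a: Seq, b: Seq) -> float:
    """Placeholder distance for equal-length sequences; an elastic measure
    such as DTW would normally replace it for facial actions."""
    return sum(abs(x - y) for x, y in zip(a, b)) / len(a)

# Toy 1D "facial action" templates (e.g. a mouth-opening trajectory).
gallery = {"subject_a": [0.0, 1.0, 2.0, 1.0, 0.0],
           "subject_b": [0.0, 2.0, 4.0, 2.0, 0.0]}
probe = [0.0, 1.1, 2.1, 0.9, 0.0]

print(identify(probe, gallery, mean_abs_distance))  # subject_a
print(verify(probe, gallery["subject_b"], mean_abs_distance, threshold=0.2))  # False
```

In practice, the verification threshold trades false accepts against false rejects and would be chosen from held-out data.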

Suitable data processing and feature extraction methods were examined. A number of pattern matching techniques were then compared, including the Fréchet distance, correlation coefficients, Hidden Markov Models, and Dynamic Time Warping and its derived forms. In light of this comparison, an improved Weighted Hybrid Derivative Dynamic Time Warping (WDTW) algorithm was proposed.
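For readers unfamiliar with the DTW family, the sketch below implements classic Dynamic Time Warping and Derivative DTW. Derivative DTW uses Keogh and Pazzani's derivative estimate. The sketch also adds a simple hybrid local cost that mixes the amplitude and derivative terms through a weight `alpha`. That hybrid and the function names are hypothetical illustrations. This is not the WDTW algorithm proposed in the paper, whose weighting is not described here.

```python
import math

def derivative(x):
    """Derivative estimate used by Derivative DTW (Keogh and Pazzani):
    d_i = ((x_i - x_{i-1}) + (x_{i+1} - x_{i-1}) / 2) / 2 at interior points;
    the two endpoints copy their neighbours. Needs len(x) >= 3."""
    d = [((x[i] - x[i - 1]) + (x[i + 1] - x[i - 1]) / 2) / 2
         for i in range(1, len(x) - 1)]
    return [d[0]] + d + [d[-1]]

def dtw(a, b, cost):
    """Classic O(len(a) * len(b)) dynamic time warping; cost(i, j) is the
    local cost of aligning a[i] with b[j]."""
    n, m = len(a), len(b)
    acc = [[math.inf] * (m + 1) for _ in range(n + 1)]
    acc[0][0] = 0.0
    for i in range(1, n + 1):
        for j in range(1, m + 1):
            acc[i][j] = cost(i - 1, j - 1) + min(
                acc[i - 1][j],      # a advances, b element repeats
                acc[i][j - 1],      # b advances, a element repeats
                acc[i - 1][j - 1],  # both advance
            )
    return acc[n][m]

def hybrid_dtw(a, b, alpha=0.5):
    """Hypothetical hybrid local cost mixing amplitude and derivative terms:
    alpha=1 gives plain DTW, alpha=0 gives Derivative DTW.
    Not the WDTW algorithm from the paper."""
    da, db = derivative(a), derivative(b)
    return dtw(a, b, lambda i, j: alpha * abs(a[i] - b[j])
                                  + (1 - alpha) * abs(da[i] - db[j]))

action = [0.0, 1.0, 2.0, 3.0, 2.0, 1.0, 0.0]
slower = [0.0, 0.0, 1.0, 1.0, 2.0, 2.0, 3.0, 3.0, 2.0, 2.0, 1.0, 1.0, 0.0, 0.0]
raised = [v + 1.0 for v in action]

print(hybrid_dtw(action, slower, alpha=1.0))  # 0.0: time-stretching is absorbed
print(hybrid_dtw(action, raised, alpha=0.0))  # 0.0: derivatives ignore a constant offset
print(hybrid_dtw(action, raised, alpha=1.0))  # positive: plain DTW sees the offset
```

The usage lines show why combining the two terms can be attractive. Amplitude matching is sensitive to offsets between recordings, whereas derivative matching compares only the shape of the motion.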

Key Paper: Assessing the Uniqueness and Permanence of Facial Actions for Use in Biometric Applications, L. Benedikt, D. Cosker, D. Marshall and P. L. Rosin, IEEE Transactions on Systems, Man and Cybernetics - Part A: Systems and Humans, 2009 (in press). BTAS 2008 conference version of paper (PDF)


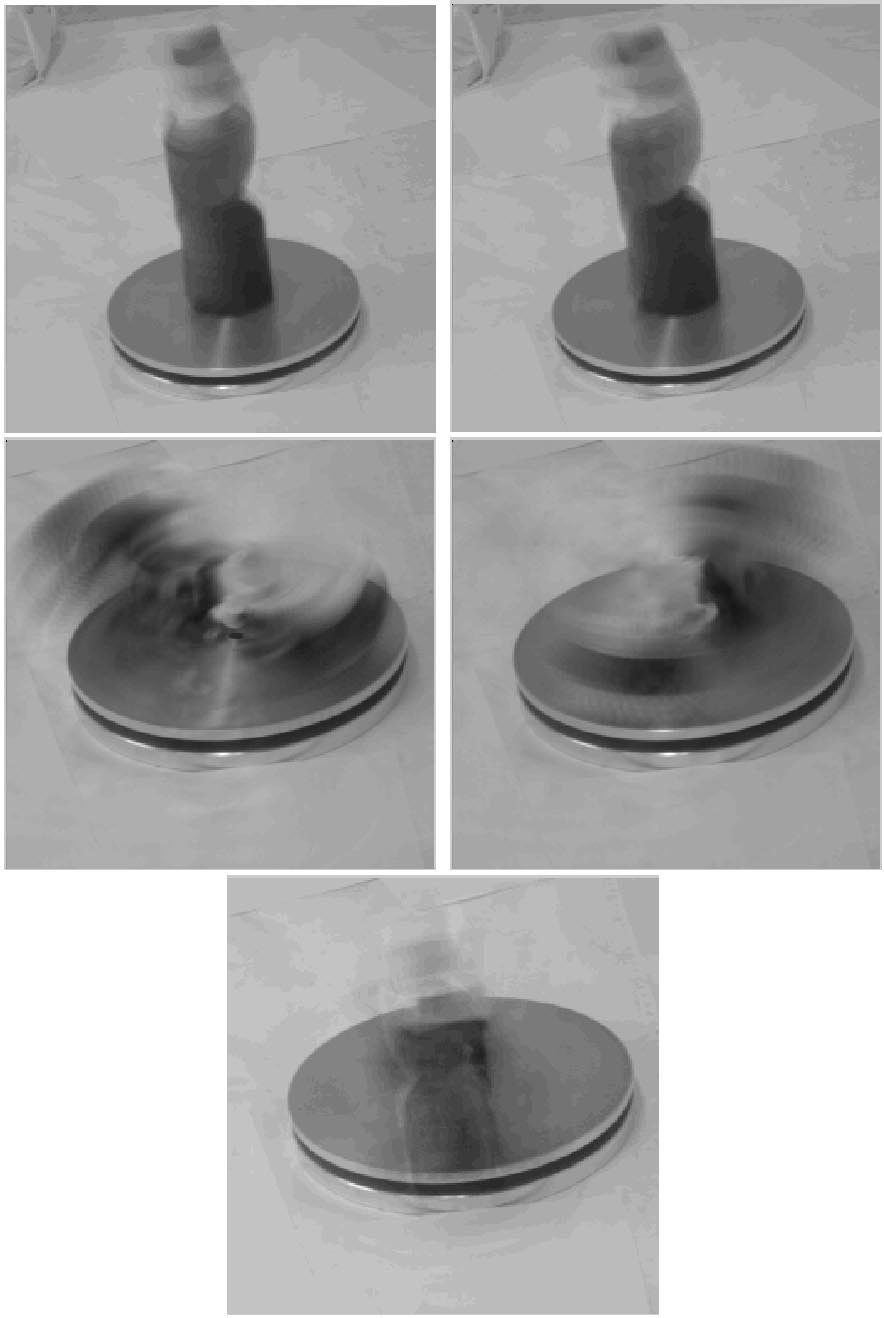

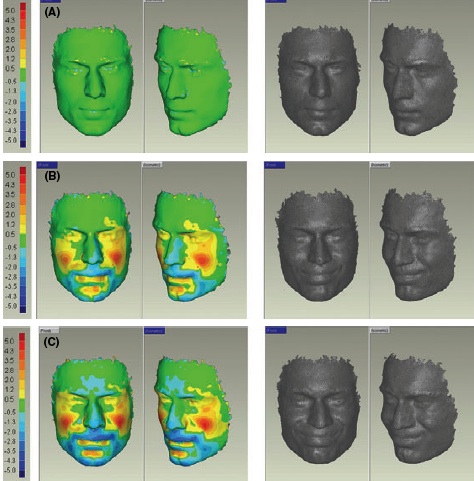

Dental Applications: Craniofacial motion analysis. Craniofacial assessment is used for the diagnosis, treatment planning and outcome evaluation of the facial structure. The evaluation and quantification of facial movement, however, is becoming particularly important, for example in children undergoing facial surgery (cleft lip and palate), in the assessment of patients with motor deficits (facial nerve palsies) and in the evaluation of psychomotor function associated with depression or pain.

Until recently, the only tools available for evaluating facial movement were based on subjective scaling assessments or two-dimensional measurements. Subjective assessments have the drawback that they are based on scales that are discontinuous and ambiguous, and although two-dimensional measurements are objective, studies have cast doubt on their validity.

This study describes experimental work centred on a novel, non-invasive imaging system capable of three-dimensional soft tissue image capture during facial movement. The methodology behind image capture is outlined, and facial movement is assessed in response to facial expressions and the spoken word.
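A simple way to quantify movement from such 3D capture is to track landmarks and measure how far each one travels from its rest position. The sketch below does this under stated assumptions. Landmark coordinates are already extracted and registered so that rigid head motion is removed, and the first frame is the rest pose. The function names, the toy data and the unit (e.g. mm) are hypothetical. This is not the measurement protocol from the papers.

```python
import numpy as np

def landmark_displacements(sequence):
    """sequence: (T, L, 3) array of 3D landmark positions for T frames and
    L landmarks, assumed already registered to remove rigid head motion,
    with frame 0 taken as the rest pose. Returns a (T, L) array of Euclidean
    distances from the rest position."""
    return np.linalg.norm(sequence - sequence[0], axis=2)

def movement_summary(sequence, fps):
    """Per-landmark peak displacement and the time (seconds) at which it occurs."""
    disp = landmark_displacements(sequence)
    return disp.max(axis=0), disp.argmax(axis=0) / fps

# Toy sequence: 3 frames, 2 landmarks (units as captured, e.g. mm).
seq = np.zeros((3, 2, 3))
seq[1, 0] = [3.0, 4.0, 0.0]   # landmark 0 moves 5 units in frame 1
seq[2, 0] = [0.0, 0.0, 1.0]
seq[2, 1] = [0.0, 2.0, 0.0]   # landmark 1 moves 2 units in frame 2
peak, peak_time = movement_summary(seq, fps=25)
```

Comparing such summaries across repeated captures of the same expression or word is one way to approach reproducibility.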

Key Papers:

Three-dimensional motion analysis - an exploratory study. Part 1: Assessment of facial movement (PDF), H. Popat, S. Richmond, R. Playle, D. Marshall, P.L. Rosin and D. Cosker, Orthodontics and Craniofacial Research, Vol. 11, pp. 216-223, 2008.

Three-dimensional motion analysis - an exploratory study. Part 2. Reproducibility of facial movement (PDF), H. Popat, S. Richmond, R. Playle, D. Marshall, P.L. Rosin and D. Cosker, Orthodontics and Craniofacial Research, Vol. 11, pp. 224-228, 2008.
